A few bad apples: the vocal, tiny (1%) minority starting most wars on Reddit

Can social media platforms do anything about online conflict and abuse without restricting free speech?

Two new, unrelated accounts of Reddit, the weirdest and wildest of the large social media platforms, were published in the past few days, and together they may bring us a bit closer to an answer.

Bans, censorship and hate speech

For years, New Yorker magazine contributing editor Andrew Marantz tried to get inside social media companies’ “decision rooms” and witness first-hand how executives make tough calls about bans, censorship and hate speech.

Just how that process plays out on each platform is becoming increasingly important. As more and more of people’s private, professional and social lives move online, so do malicious actors aiming to influence, confuse, persuade, disturb and harass.

Twitter, Snapchat and Facebook were not particularly interested in letting a reporter listen in on those conversations. But management at Reddit, the fourth-largest website in the United States, decided to let him hang out there for a year.

The result is an outstanding, in-depth article, which you should go ahead and read. Reddit’s approach to content moderation, though, is well summed up in this quote from Reddit co-founder and CEO Steven Huffman:

“I don’t think I’m going to leave the office one Friday and go, ‘Mission accomplished—we fixed the Internet.’ Every day, you keep visiting different parts of the site, opening this random door or that random door—‘What’s it like in here? Does this feel like a shitty place to be? No, people are generally having a good time, nobody’s hatching any evil plots, nobody’s crying. O.K., great.’ And you move on to the next room.”

The piece raises more questions than it answers, but if we pair it with a recent Stanford study about conflict on Reddit, we can begin to see how Reddit’s strategy might be a promising one.

Nipping conflicts in the bud

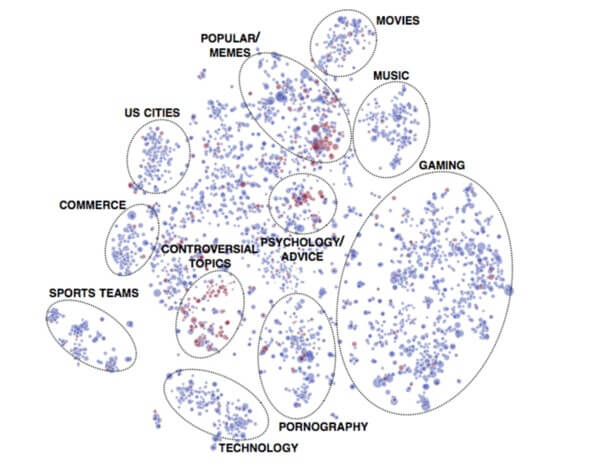

The study set out to “examine cases of intercommunity conflict ('wars' or 'raids'), where members of one Reddit community, called "subreddit", collectively mobilize to participate in or attack another community.”

The study is encouraging because it shows that a few small, vocal minorities tend to start most of the fights online. Here are the main takeaways from the study (full paper here):

- “A small number of communities initiate most conflicts, with 1% of communities initiating 74% of all conflicts ....These communities attack other communities that are similar in topic but different in point of view.

- Conflicts are initiated by active community members but are carried out by less active users. It is usually highly active users that post hyperlinks to target communities, but it is more peripheral users who actually follow these links and participate in conflicts.

- Conflicts are marked by the formation of "echo-chambers"...."attackers" interact with "attackers" and "defenders" with "defenders"

- Conflicts have long-term adverse effects on the engagement of members of the target community, but these adverse effects are mitigated when the "defending" community members engage in heated, direct debates with the "attackers".

- Conflicts can be defended against when the attacked community directly engages with ('fights back') the attacking users.”

The early-warning system for conflicts

The authors of the study, researchers from the Computer Science & Linguistics department at Stanford, also created a “model to predict whether a link from one community to another is going to lead to conflict,” which “could be used to create a 'raid' early-warning system for moderators to inform them of a potential impending influx of toxic users.”

Huffman might consider looking into these techniques, in order to keep Reddit an interesting, humorous place where the mostly-non-belligerent 99% of the communities sometimes come together to create beautiful things.

On April Fools’ Day last year, while most tech companies amused themselves with predictable, marketing-driven parodies, Reddit set out to create what Marantz calls “a genuine social experiment.”

“It was called r/Place, and it was a blank square, a thousand pixels by a thousand pixels. In the beginning, all million pixels were white. Once the experiment started, anyone could change a single pixel, anywhere on the grid, to one of sixteen colors. The only restriction was speed: the algorithm allowed each redditor to alter just one pixel every five minutes.”
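
The rules Marantz describes are simple enough to sketch in a few lines of Python. What follows is only an illustration under stated assumptions, not Reddit's actual implementation: the `Canvas` class, its `place` method, and the use of color index 0 for white are all hypothetical names and choices introduced here.

```python
import time

GRID_SIZE = 1000            # a thousand pixels by a thousand pixels
NUM_COLORS = 16             # sixteen available colors
COOLDOWN_SECONDS = 5 * 60   # one pixel per redditor every five minutes
WHITE = 0                   # assumption: color index 0 stands for white


class Canvas:
    """Hypothetical model of the r/Place rules quoted above."""

    def __init__(self):
        # In the beginning, all million pixels are white.
        self.pixels = [[WHITE] * GRID_SIZE for _ in range(GRID_SIZE)]
        # Maps each user to the time of their last accepted placement.
        self.last_placed = {}

    def place(self, user, x, y, color, now=None):
        """Try to color one pixel; return True if the change is accepted."""
        now = time.time() if now is None else now
        # Reject coordinates outside the grid.
        if not (0 <= x < GRID_SIZE and 0 <= y < GRID_SIZE):
            return False
        # Reject colors outside the sixteen-color palette.
        if not (0 <= color < NUM_COLORS):
            return False
        # The only restriction is speed: enforce the five-minute cooldown.
        last = self.last_placed.get(user)
        if last is not None and now - last < COOLDOWN_SECONDS:
            return False
        self.pixels[y][x] = color
        self.last_placed[user] = now
        return True
```

In this sketch, a user who calls `place` twice within five minutes has the second call rejected, while a different user can still overwrite the same pixel right away. That combination is what made the canvas a contest between communities rather than between individuals.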

The resulting canvas is below (the GIF is a large file, so give it time to load).

Yesterday Marantz, of course, held an AMA (a crowdsourced interview that is one of Reddit’s trademark formats) to discuss his article.