7 big ideas about researching images in social media

What I learnt from 'Picturing The Social', a conference from the Visual Social Media Lab

Each year, as data storage gets cheaper and bandwidth gets faster, the internet gets more visual. For those of us who are social media researchers, that's a big problem.

Social media research tends to default to text analytics - but in a world of Instagram, Pinterest, memes and vloggers, our usual tools of keyword API searches, wordclouds & discourse analysis just don't work. Art and film theory give us a set of tools to make sense of images - but only one image at a time. 1.8 billion images are posted to social media every day. How do you make sense of that?

Figuring some of this stuff out is why we at Pulsar have co-founded the Visual Social Media Lab alongside researchers from the Universities of Sheffield, Manchester Metropolitan, Warwick & Wolverhampton. A couple of weeks ago I went with my colleague Francesco D'Orazio to the Lab's first conference, Picturing The Social.

It was fascinating. Here are 7 ideas that changed how I think about understanding photos and images in social media.

7 big ideas about visual social media

1. We need to build new models for understanding visual social media

Lab director Farida Vis (@FlyGirlTwo) introduced the conference. Her key point was that the traditional media broadcast model analyses communication in 3 separate stages (production, text, reception) but this isn't sufficient for understanding visual, socially networked media.

Instead Farida proposed a 3-part model for analysing images online:

- Structures (e.g. the social media companies hosting our images, and how they shape what we see)

- Users (at every stage)

- Content (close analysis of the images themselves: remember that it's 'qualitative data on a mass scale')

2. Social media decays over time

Shaun Walker did a research project on viral videos & blogs – but by the time he got round to downloading & archiving those videos 2 months after they were created, 25% of the videos were gone. That's a huge challenge for research.

Social media analysis has to both capture what was there in the moment, and then make sure we can study it in that form. “A webpage rendered in 1996 technology looks very different than rendered in a modern browser today,” he noted.

So the ideal social media research platform needs to be able to:

- Expand shortened URLs to help avoid linkrot

- Crawl URLs to capture the structure & HTML

- Store rendered URLs (visual screenshots)
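As a rough illustration of the first two requirements, here is a minimal Python sketch. It's my own assumption of how such a capture step might look, not anything shown at the conference: the names `follow_redirects`, `capture` and `Snapshot` are hypothetical, and the redirect lookup and page fetcher are passed in as functions so the logic can be tried without touching the network. Screenshots (the third requirement) would need a headless browser and aren't covered here.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Optional


def follow_redirects(
    url: str,
    next_hop: Callable[[str], Optional[str]],
    max_hops: int = 10,
) -> str:
    """Expand a shortened URL by walking its redirect chain.

    next_hop(url) returns the redirect target, or None if url is final.
    Stops on a redirect loop or after max_hops, returning the last URL reached.
    """
    seen = {url}
    for _ in range(max_hops):
        target = next_hop(url)
        if target is None or target in seen:
            return url
        seen.add(target)
        url = target
    return url


@dataclass
class Snapshot:
    """What we keep so the page can still be studied after the link rots."""
    short_url: str
    final_url: str
    html: str
    sha256: str        # fingerprint of the captured HTML
    captured_at: str   # UTC timestamp, ISO 8601


def capture(
    short_url: str,
    next_hop: Callable[[str], Optional[str]],
    fetch_html: Callable[[str], str],
) -> Snapshot:
    """Expand the URL, fetch the HTML and record when it was captured."""
    final_url = follow_redirects(short_url, next_hop)
    html = fetch_html(final_url)
    return Snapshot(
        short_url=short_url,
        final_url=final_url,
        html=html,
        sha256=hashlib.sha256(html.encode("utf-8")).hexdigest(),
        captured_at=datetime.now(timezone.utc).isoformat(),
    )
```

In a live setting, `next_hop` and `fetch_html` would wrap real HTTP requests. Storing both the short and the expanded URL alongside a timestamp means the record stays meaningful even if the shortener or the page itself later disappears.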

3. We can’t assume we know what users want or intend with images

In particular, we can't assume that people are saving an image “for later” or for permanency given services like Snapchat. There's a generation gap here: older people tend to see images in terms of creating a historical record, or memory. That's not necessarily true for a 15-year-old taking 500 selfies a day because she's got near-infinite, free data storage and doesn't need to delete anything, ever.

4. The fantasy of the perfect archive

An audience member raised the question of "the dream that everything is archived, ready for the social scientist to study. The fantasy of perfect software that can be developed," so that everything is visible and analysable. She argued we should "think of them as haunted archives from the beginning – a form of culturally remembering and rethinking things."

Francesco D'Orazio flagged up the dangerous assumption that big data is ‘whole’ just because it’s big, and representative because it’s whole and big. It's neither of these things!

A useful reminder for us as commercial researchers (and our clients): remember that we don't have direct or complete access to public opinion. What we see is what people have chosen to represent in public. That's quite a different thing and affects the conclusions we can draw.

5. “Selfies are arguably the ultimate devalued photograph”

Anne Burns at 'Picturing The Social' (Photo: Rebecca Lupton)

“We live in an age now where photography rains down on us like sewage from above,” said Grayson Perry on BBC Radio 4. Anne Burns (@AnneLBurns) argued that taking photos of landscapes or food may be equally repetitive – but the selfie is more stigmatised because it’s seen as a feminised practice.

The media and popular discourse describe selfies as immature, degenerate, selfish, lazy, socially awkward, narcissistic, and insecure. These aren't seen just as qualities of the media form itself - instead they're transferred to the selfie-taker him- or more usually herself. Criticism of the selfie – for example men's disdain of the ‘duckface’ pose – becomes a means of shaming and disciplining others to enforce social norms and control.

Yet once selfies are taken by socially valued subjects (astronauts, celebrities, Leica owners), the selfie is magically redeemed.

(NB the Selfie City project found that yes, women post 80% of all selfies in Moscow - yet among the over-40s, men post more selfies than women. As ever, reality is more complex than the social stereotypes allow.)

Food for thought for brands here, who've been pushing selfie marketing campaigns hard in the last couple of years. How'd you build a campaign that doesn't get your customer slagged off by her friends for being vain, a band-wagon jumper, just out for attention, & so on?

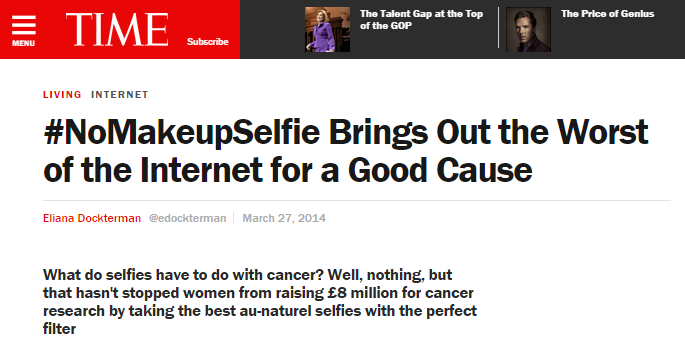

6. Comparing the #NoMakeupSelfie and #ThumbsUpforStephen campaigns

#nomakeupselfie criticism in Time magazine

Ruth A. Deller (@ruthdeller)'s presentation looked at exactly this issue: two charity campaigns that used selfies to spread their message, but got very different public receptions. As you may remember, #NoMakeupSelfie drove extensive commentary on the rights and wrongs of the campaign:

- Showing off vs being charitable

- Should women wear makeup? Is it wrong to say not wearing makeup is radical?

- Criticism of how individual women (especially celebrities) looked in their photos

These rest on wider British cultural contradictions about how women are supposed to look - the competing pressures to look nice, not be vain, and definitely never admit that you might look good. Further, as a genuinely grass-roots campaign (started by single mum Fiona Cunningham, who was inspired to post in support of Kim Novak being photographed without makeup on) there was no central authority driving a clear narrative about what these selfies were supposed to mean. As a result, #NoMakeupSelfie was contested and drove as much criticism of participants as it did praise.

By contrast, the #ThumbsUpForStephen campaign didn't drive any of this moral debate - the public narrative was simply, "This is a young guy, doing a good thing, let’s support him". Without the gendered beauty dimension (and muted by the fact that Stephen was dying - perhaps there is some decency left online), the tone of discussion was far more positive.

7. What do the machines see? The world according to image recognition APIs

My colleague Francesco D’Orazio (@abc3d) argued that 1.8 billion images are being shared on social media every day – so text mining is not enough to understand what’s going on. We need to be able to analyse images properly at this mass scale. But you can’t just look at all of them – and superimposing them into one mega-image isn’t going to make any sense. So you need algorithmic analysis alongside qualitative research.

But are the algorithms any good? Fran walked us through a number of analytics APIs like Alchemy Vision and Imagga to demonstrate what could currently be done. Now it's not qual. And it's not always accurate (the APIs couldn't tell Boris Johnson's yawn from his surprised face). But they demonstrated that automated analysis can get a lot further than many people realised - not just "That's a photo of a man", but estimates of his mood and social status.

While it was fascinating to see this analysis in action, we've got to remember the risks too. Algorithms may be impersonal but they're not objective. Understanding the massive biases of these algorithms, and how their results can entrench inequalities, is work that tech sociologists are already starting to do (e.g. Syreeta McFadden, Kate Crawford). But criticism alone won't change much. I'd like to see people working with the analytics firms to improve the algorithms, catching issues that programmers might not see, and finding ways to document & disclose the software's built-in assumptions.

For example, the APIs Fran demonstrated seemed to be reading a lot from Boris Johnson’s suit and tie. Could they recognise a woman as a senior executive without those cues? Or might these algorithms end up unintentionally but systematically under-representing powerful women?

Find out more

We'll share Fran's presentation when he's got it up on Slideshare. Meanwhile...

- Photos are up on Pinterest - that's a first for a conference! Thanks Rebecca Lupton for documenting.

- Download the conference guide here, with abstracts for all the talks

- Audio is available on Soundcloud, if you fancy Fran, Farida and all the rest as a podcast for your commute

- Presentations will make it to Slideshare, but aren't there yet...

- Follow the Visual Social Media Lab on their website and Twitter @VisSocMediaLab.

- Read back on the conference hashtag #VisSocMedConf

Many thanks to Farida and the Visual Social Media Lab team for organising this great event - fascinating, friendly, and a great kick-off showing where the Lab's work is going. Fran and I will be sharing more from every step of the way, so stay tuned.

Get the latest Pulsar and social media news from us over on Twitter at @Pulsar_Social, or contact me at [email protected]